Music and language overlap: the latest review

Hello dear reader, and a happy 2013 to you! I hope that you had a pleasant holiday season. I enjoyed visiting my family in both York and Zaragoza (Spain) and completely overate, as is right at this time of the year. Now a slightly chubbier version of me is sitting back in the office catching up on my reading.

One of the first things I picked up this week was a review article by Robert (Bob) Slevc on the shared and distinct processing mechanisms for language and music. The review was so good that I added it to my lecture on this subject, where it replaced my previous ‘essential reading’ for the class. The article is highly accessible, which makes it perfect for students.


I thought I would also write a bit here about the main points covered. This will help cement the arguments in my mind and give you guys an idea of the contents. I certainly recommend that you read the original as well.

The article summarizes evidence for both overlap and distinct processing between language and music in three key areas: sound, structure and meaning.

![audio sound waves](http://musicpsychology.co.uk/wp-content/uploads/2013/01/audio-sound-waves-img11-150x150.jpg)

Sound: The most obvious similarity is that language and music are both complex human sound systems. It therefore makes sense that their processing overlaps during the early stages of the auditory system. At some later point, the brain pathways for the two types of sound appear to diverge, with speech processing relying more on structures in the left hemisphere and music on structures in the right.


One recent argument made by Robert Zatorre is that this split does not reflect specialization per se, but rather the natural strengths of each hemisphere: the left is better at rapid temporal processing (needed more often for speech) and the right at spectral discrimination (relatively more important in music). According to this view, the hemispheres may simply be biased towards particular characteristics of sound rather than specialized for language and music.

At the moment I like this argument, as it leaves room for explaining how we process other types of sound that tend to get ignored in comparisons of music and language. What about animal or environmental noises, which we must have been hearing long before we invented speech or song?


Although language and music are processed divergently at higher stages in the brain, this does not mean there can’t be overlap there too. Bob discusses the evidence for both music-to-language transfer effects (e.g. musicians performing better on verbal tasks) and language-to-music transfer effects (e.g. speakers of tone languages showing some music-related advantages, such as a higher rate of absolute pitch and more accurate memory for melody).

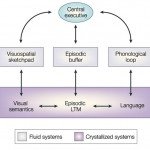

I am naturally biased, but my top candidate for a shared process that links verbal and musical sounds is working memory. I have spent years exploring the recall of musical and linguistic sequences, and I have seen how, especially in musicians, the two codes within memory can mesh, bind and support each other. What I would like to understand better is the extent to which this is automatic/subconscious, or whether it results from conscious strategies that facilitate dual processing of music and language.


Structure: Language and music each have their own type of syntax or grammar. For a sentence or a melody to ‘make sense’, it needs to follow certain rules; otherwise it sounds like a messy jumble of words or notes.

Ani Patel hypothesised that although these rule systems differ, integrating them probably demands a similar processing system. In other words, similar processes are probably involved in applying known grammar rules online, as new notes or words arrive, in order to make sense of the evolving melody or message. This shared syntactic integration resource hypothesis has received widespread support from both brain and behaviour studies, which are expertly outlined in the review.

This research represents an important step forward in understanding how the brain shares resources across the two sound systems; in a complex system like the brain, just as in the current world financial system, economy is important. The next step will be to work out which processes are really being shared, and how. Again, my vote goes for exploring working memory!

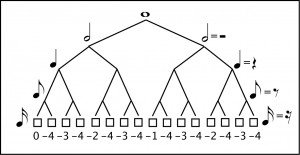

The review also covers overlaps in rhythmic structure. Rhythm also exhibits rich hierarchical structure, and I would not be surprised if the processing systems involved in deciphering and predicting complex rhythms also subserved elements of linguistic processing.


We see commonalities between the rhythms of some languages and their native music (e.g. French and English), and native language can affect how people hear rhythms. This field is still in its infancy, however, and more work establishing causation is needed.

Meaning: Finally, Bob summarizes research into the similarities between meaning in music and language. This field has recently been brought to the fore by the publication of Stefan Koelsch’s book ‘Brain and Music’, which defines two categories of musical meaning that share characteristics with language: extramusical meaning (referring to something in the world; in music this is seen in tone painting and leitmotifs) and musicogenic meaning (emotional communication).

The most important thing I take from this review is that we are moving beyond the old arguments about modularity and into the promising area of exploring where, how and why pockets of overlap between music and language processing exist. Narrowing these down holds great promise for understanding how music can aid verbal processing throughout life, from learning through to preserving function in the face of illness, injury or age-related decline.


Thanks to Bob for this article. Anyone who would like a copy can contact him at slevc- at – umd.edu.

Slevc, L.R. (2012). Language and music: Sound, structure, and meaning. Wiley Interdisciplinary Reviews: Cognitive Science, 3, 483-492. doi: 10.1002/wcs.1186

4 Comments

ian james slade

Dear Dr Vicky,

Firstly, I love the idea about your site.

I wanted to ask whether you knew of any leads for my idea for a PhD project. It's based on the practical aspects of translating EEG frequencies into light and sound frequencies in a real-time display, in order to create a neurofeedback loop. I think this could be done quite simply using sensors, circuitry and computer programming. The applications of such a real-time sensory feedback loop based on electrical brain-wave activity are far-reaching. They have implications for therapeutic uses and for research aims: exploring the electrical characteristics of different thought processes, using the feedback loop to explore the possibilities of neurological programming, and controlling a computer interface with the EEG input. All very exciting possibilities, I think.

I’m finding it very hard to get people to commit to this project, given its cross-disciplinary nature, I suppose. Do you know of any institutions that you think would be a good fit for it?

Thanks, and all the very best, Ian

vicky

Hi Ian

Sounds like a very interesting project, and something that may be of interest to some of my colleagues at Goldsmiths. I would suggest contacting Mick Grierson first of all and asking him if he is at all interested or knows someone who may be (http://doc.gold.ac.uk/~mus02mg/). You could also try Professor Joydeep Bhattacharya, also at Goldsmiths (http://www.gold.ac.uk/psychology/staff/bhattacharya/).

All the best,

Vicky

ian james slade

Thank you so much.
